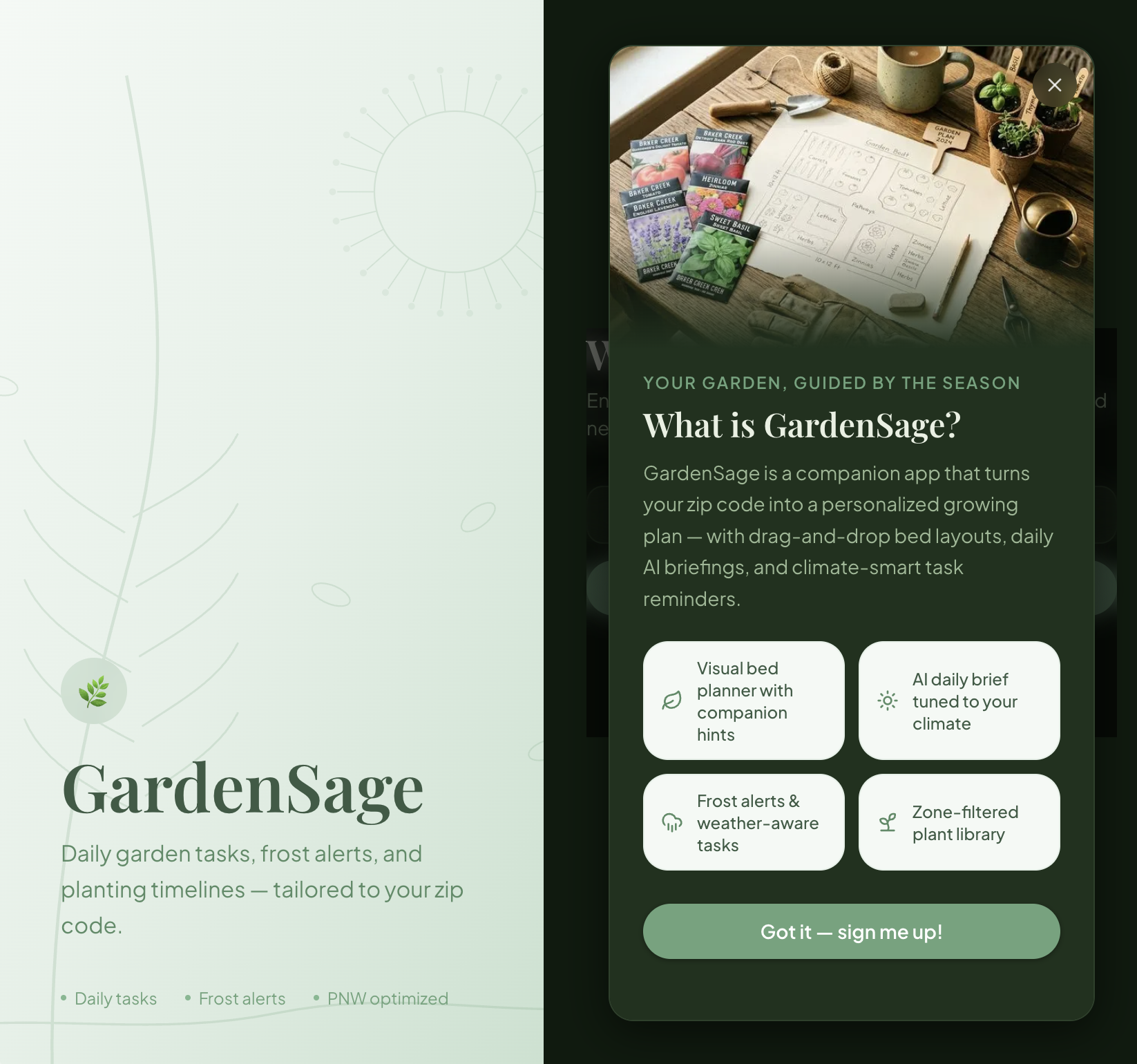

I was searching for an app idea to build when my sister handed me one during a weekend afternoon conversation. She has a green thumb and has been increasingly ambitious in her gardening endeavors. She spent an hour laying out every problem she faces when trying to manage her garden, and it became clear that individual LLM tools and chatbots were failing her most pressing needs.

The problems came in no particular order. Growing and maintaining plants requires understanding what each one needs, but she has no time to track it all. Every seed has different requirements. Some need light to germinate, others darkness. Some need a cold start in the fridge. Her region and climate matter, and conditions vary every year. She needs to consider weather, daily conditions, frost warnings, and sun paths. By her own estimate, about 90 percent of what she plants currently fails.

She searches for information but forgets it. She needs history. She needs the system to automatically surface suggestions on the best time to plant and when to apply preventative treatments. She wants negative feedback. Tell her gladiolas are the worst plant for her zip code and suggest a better-suited plant instead. Slugs are a problem, and she needs to apply preventative measures in February, not after they have already eaten everything.

She wants the app to consider her garden location and sun exposure. Give her a daily or weekly task list based on current plants and conditions. Retain basic facts about her location and always-need-to-do tasks. Do not refuse plants. Instead, give her ways to enable growth. Suggest companion planting. Allow her to define planting themes. Look at upcoming weather and give alerts. Cover your pansies. Frost is coming. Show what to expect each day as plants grow.

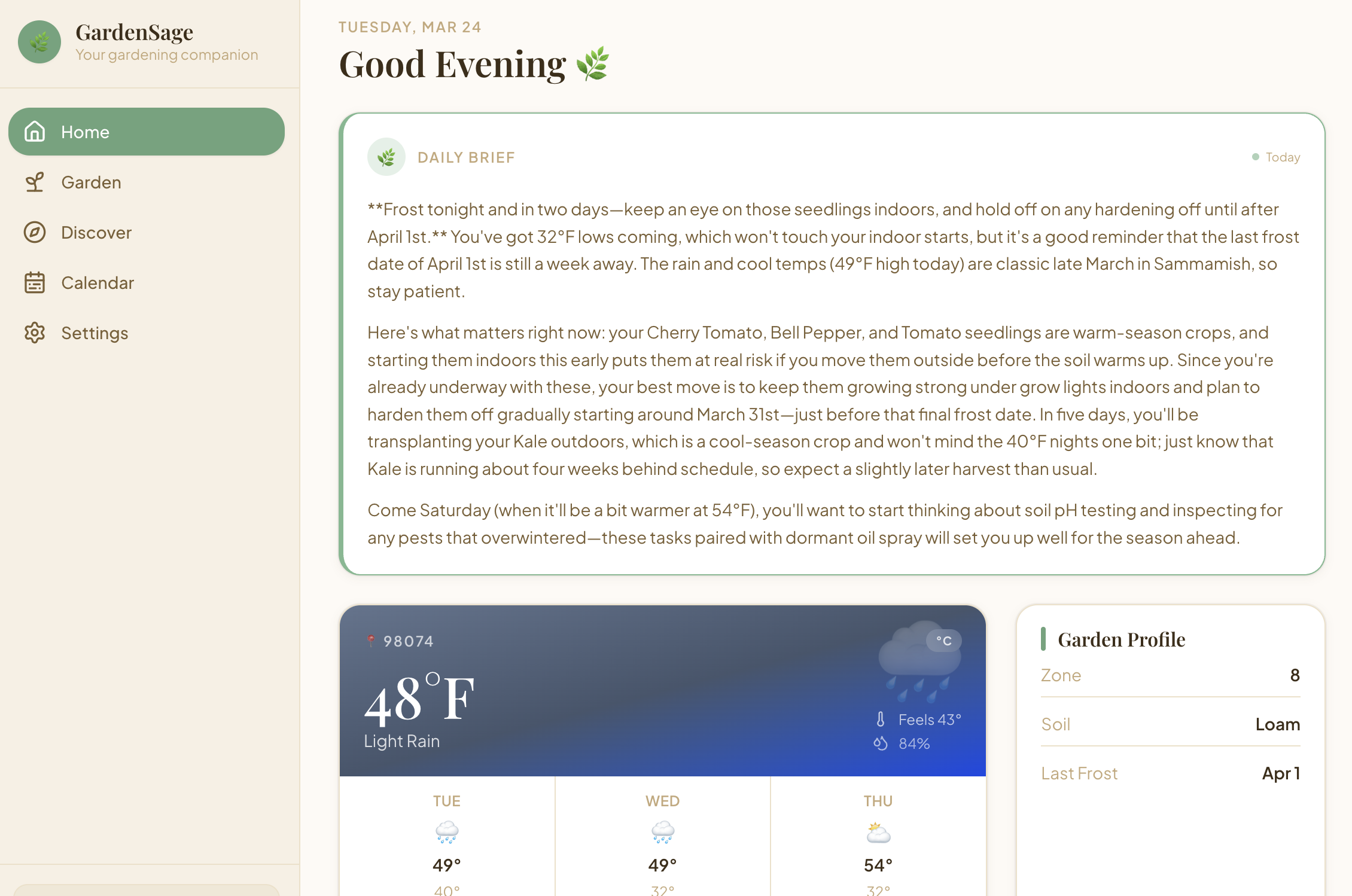

I set out to create this app for an audience of one. I also wanted to use this opportunity to finally build a real LLM integration with API keys kept server-side in Vercel environment variables, a real database integration using Supabase, and real email authentication using Supabase Auth and Resend.
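To illustrate the API key handling, here is a minimal sketch of reading a key from an environment variable on the server and attaching it to an outgoing request. It is not the app's actual code: the variable name `LLM_API_KEY`, the endpoint URL, and the payload shape are all hypothetical. The environment is passed in as a parameter so the function can be exercised without a running server.

```typescript
// Sketch only. LLM_API_KEY, the endpoint URL, and the payload shape are assumptions.
type Env = Record<string, string | undefined>;

interface LlmRequest {
  url: string;
  init: { method: string; headers: Record<string, string>; body: string };
}

// Fail loudly when the secret is missing instead of sending an unauthenticated request.
function readServerSecret(name: string, env: Env): string {
  const value = env[name];
  if (!value) {
    throw new Error(`Missing environment variable: ${name}`);
  }
  return value;
}

// Build the request on the server. The key never appears in code shipped to the browser.
function buildLlmRequest(prompt: string, env: Env): LlmRequest {
  const apiKey = readServerSecret("LLM_API_KEY", env);
  return {
    url: "https://llm.example.com/v1/generate", // placeholder endpoint
    init: {
      method: "POST",
      headers: {
        "Content-Type": "application/json",
        Authorization: `Bearer ${apiKey}`,
      },
      body: JSON.stringify({ prompt }),
    },
  };
}
```

In a Next.js route handler this could be called as `buildLlmRequest(prompt, process.env)`. Next.js only exposes variables prefixed with `NEXT_PUBLIC_` to the browser, so a key named without that prefix stays on the server.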

The stack is Next.js 14 App Router, Supabase, Tailwind, shadcn/ui, and @dnd-kit for drag-and-drop garden bed layout. I chose Next.js App Router for its server component model. Most of the app, GardenSage, is read-heavy: plant data, care schedules, weather. Keeping rendering on the server felt natural. Supabase handles authentication, the database, and row-level security, so users, data, and access control live on one platform without rolling custom auth. @dnd-kit powers the drag-and-drop garden bed canvas, which ended up being the most tactile part of the app.
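One way a garden bed canvas can turn a drag into a new plant position is to snap the pixel offset to grid cells. The sketch below assumes a bed modeled as a grid of square cells; the `Placement` and `Bed` types and both function names are hypothetical, not taken from the app. The `Delta` shape matches the `delta` field that @dnd-kit reports on a drag-end event.

```typescript
// Sketch only. Placement, Bed, and the function names are illustrative assumptions.
interface Delta { x: number; y: number } // pixel offset, same shape as @dnd-kit's drag-end `delta`
interface Placement { plantId: string; col: number; row: number }
interface Bed { cols: number; rows: number; cellPx: number }

function clamp(value: number, min: number, max: number): number {
  return Math.min(Math.max(value, min), max);
}

// Convert the pixel offset to whole cells, then keep the plant inside the bed.
function movePlacement(p: Placement, delta: Delta, bed: Bed): Placement {
  const col = clamp(p.col + Math.round(delta.x / bed.cellPx), 0, bed.cols - 1);
  const row = clamp(p.row + Math.round(delta.y / bed.cellPx), 0, bed.rows - 1);
  return { ...p, col, row };
}

// A cell is free when no other plant already occupies it.
function isCellFree(placements: Placement[], target: Placement): boolean {
  return !placements.some(
    (other) =>
      other.plantId !== target.plantId &&
      other.col === target.col &&
      other.row === target.row
  );
}
```

A drag-end handler could call `movePlacement` with the event's `delta` and commit the result only when `isCellFree` returns true, leaving the plant where it was otherwise.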

I wrote the data model seed-first. SQL migrations for the plant catalog, companion relationships, and care schedules came before any React components. This meant the UI always had real data to render against, which caught edge cases early. The tradeoff is that the seed data, more than 138,000 lines of SQL, is clunky to migrate and hard to diff in review.
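As a rough illustration of the three kinds of seed data and the sort of edge case that real data surfaces, here is a sketch of TypeScript shapes plus an integrity check. The field names are assumptions, not the app's schema. In SQL, most of these checks would normally be expressed as foreign keys and `CHECK` constraints.

```typescript
// Sketch only. These shapes are hypothetical stand-ins for the seeded tables.
interface Plant { id: string; name: string }
interface CompanionPair { plantId: string; companionId: string }
interface CareTask { plantId: string; task: string; month: number } // month is 1 to 12

interface SeedData {
  plants: Plant[];
  companions: CompanionPair[];
  careSchedule: CareTask[];
}

// Collect human-readable problems instead of stopping at the first one.
function findSeedProblems(seed: SeedData): string[] {
  const knownIds = new Set(seed.plants.map((p) => p.id));
  const problems: string[] = [];

  for (const pair of seed.companions) {
    for (const id of [pair.plantId, pair.companionId]) {
      if (!knownIds.has(id)) {
        problems.push(`companion pair references unknown plant "${id}"`);
      }
    }
    if (pair.plantId === pair.companionId) {
      problems.push(`plant "${pair.plantId}" is listed as its own companion`);
    }
  }

  for (const task of seed.careSchedule) {
    if (!knownIds.has(task.plantId)) {
      problems.push(`care task references unknown plant "${task.plantId}"`);
    }
    if (!Number.isInteger(task.month) || task.month < 1 || task.month > 12) {
      problems.push(`care task "${task.task}" has invalid month ${task.month}`);
    }
  }

  return problems;
}
```

Running a check like this against the seed before building UI is one way to get the early feedback described above without reading the raw SQL by hand.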

My sister now has an app that remembers her garden layout, tracks what she planted, and tells her when to cover her pansies before the frost hits. It solves problems for exactly one person, which makes it the most useful thing I have built in years.